AI Won't Replace You. Someone Who Decides Will.

I'm a lawyer in Singapore, and the message right now is clear: adopt AI or get left behind. The profession is flagged as high-risk for disruption. Government initiatives are pushing adoption. Clients are asking what tools you use. And underneath all the urgency is a quiet anxiety — that the ground is shifting faster than you can map it, and nobody is being honest about what you're supposed to hold onto.

Some respond by drawing a line. Many law firms have their own version of the blanket refusal. "We don't use generative AI." "All AI-assisted work must be fully human-reviewed." These sound principled. Often they're branding — a way to signal trustworthiness without engaging with the harder questions underneath.

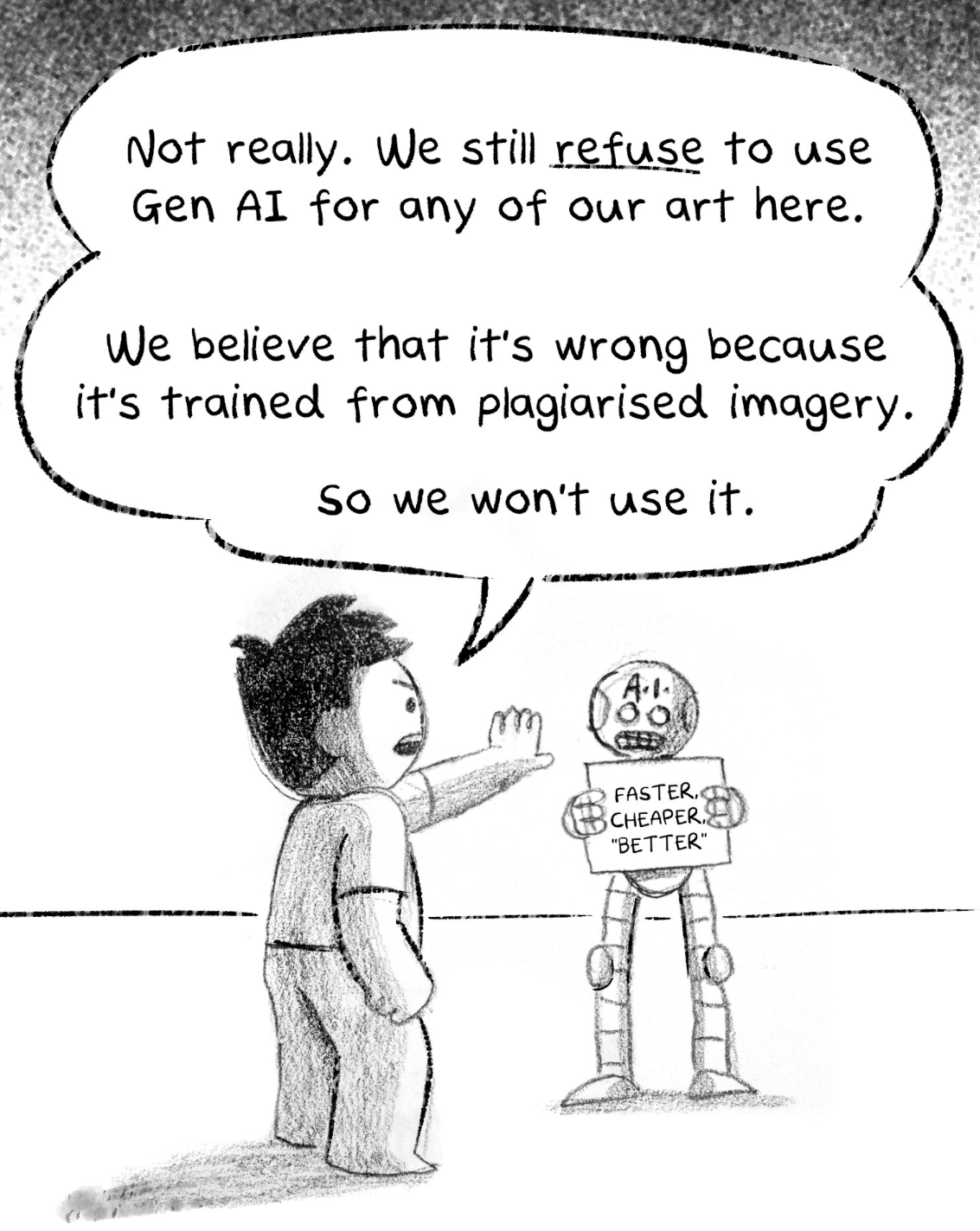

In March 2026, The Woke Salaryman (TWS) published a comic that crystallises this pattern. Drawn in pencil on paper, a departure from their usual digital workflow, it laid out their refusal to use generative AI for artwork. The moral case rested on a single claim: generative AI is trained on "plagiarised imagery."

That's the appeal of the blanket rule. You stand on the right side without getting into specifics.

Eyes closed, eyes open

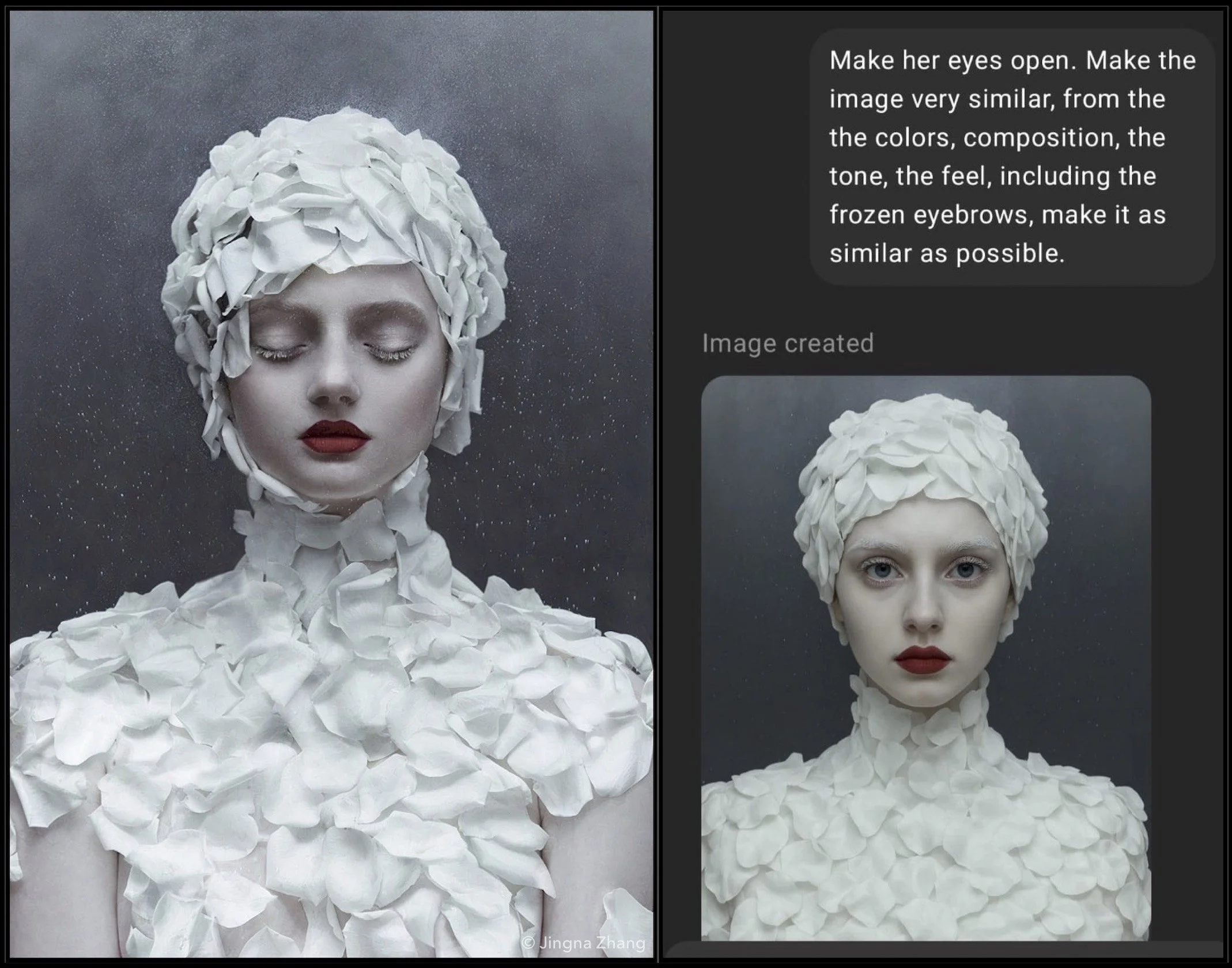

Singaporean photographer Jingna Zhang forces you into specifics. In 2025, someone ran one of her portraits through OpenAI's image model, replicating its colours, tone, and feel in seconds while changing only a few subtle details. It seems a slight change, but I can't help but return to it.

The difference between a portrait with eyes closed and one with eyes open isn't technical. It's a decision. Eyes closed conveys vulnerability, intimacy, interiority. Eyes open conveys confrontation, presence. Zhang chose one over the other. That act of choosing is the art.

AI doesn't make this choice. It resolves a statistical pattern. When an image model produces a portrait with eyes closed, there's no choice, only a convergence of patterns. Deciding isn't something AI does badly. It's something AI doesn't do.

The training data is inert

This reframes something TWS never engages with. Their moral case locates the problem in the training data — the millions of images that went into the model. But the training data doesn't do anything. It sits in the model the way paint sits in a tube. Paint doesn't plagiarise. A person picks up the brush.

If AI cannot practise intentionality (and it can't), then the person behind the prompt isn't just part of the moral equation. They are the moral equation. Lawyers are familiar with this tenet: responsibility should rest only where intention exists, and that means with the human.

“A computer can never be held accountable, therefore a computer must never make a management decision.” – IBM Training Manual, 1979

Which means blanket positions about the technology — TWS's refusal, law firms' AI bans — are aimed at the wrong target entirely. The technology is inert. The decisions are human. And the decisions can be good or terrible. That's not a flaw in the argument. That's the argument.

The tension we actually live with

Most professionals don't get the clean exit TWS takes. The tension is internal: I know AI has problems. I'm also being told to use it — by my employer, my clients, the government, my own need to stay competitive. Nobody has given me a clear framework for how to hold both.

"We don't use AI" is one big decision that replaces a thousand smaller ones. You never have to ask: would AI be appropriate here? What am I choosing? What decisions need to remain mine? It looks like conviction. It functions as abdication.

I feel this tension myself. I use AI to generate contract clauses — it's part of the workflow now. And every time, there's a question I can't fully shake: are these my clauses, or am I just accepting what the tool recommended? The more fluent you get with the tools, the harder it becomes to locate where the AI's judgment ends and yours begins. I'm never entirely sure. That uncertainty doesn't go away with experience. If anything, it sharpens.

And here's the warning: the people who replace you won't be machines. They'll be the humans who decide where to deploy those machines, and whose jobs to cut first. Some will decide well. Some will decide badly, like Zhang's plagiarist. But they'll all be in the work while you're standing behind your blanket refusal.

What AI actually reveals

There's a comforting story making the rounds: AI handles the low-end work, which frees humans for the high-end work. Juniors are at risk. Seniors are safe. The routine gets automated, the complex stays human.

This smuggles in an assumption that the profession has never examined: that some work inherently contains decisions and some doesn't. Document review is "low-end." Strategy is "high-end." AI takes the empty calories, humans keep the nutrition.

But there's no such thing as decision-free work. There's only work where we've stopped noticing the decisions. Document review isn't low-end because it lacks choices. It's been labelled low-end because the profession decided those choices weren't worth a senior person's attention. The decisions are still there — what's relevant, what's a red flag, what pattern connects this document to that clause. A junior doing doc review with intentionality is making hundreds of micro-decisions. A senior "doing strategy" on autopilot is making none.

The "AI takes low, humans take high" framing makes the same mistake as Zhang's plagiarist. It looks at routine-looking output and concludes the decisions inside it were never there. Worse, it creates a false sense of safety. If you define your work as "high-end," you stop examining whether you're actually deciding or just performing seniority.

AI doesn't respect the hierarchy. It reveals that the real distinction was never junior versus senior, routine versus complex. It was always deciding versus not deciding. And that cuts across every task at every level.

How you save yourself

So if you're in a profession being reshaped by AI, how do you not get replaced?

Not by being faster. Not by knowing more. Not by waiting for someone to figure out the policy. You start deciding.

- A template lands on your desk. You can fill it in, or you can ask: what decisions are fossilised in this? Would I make the same choices for this client, this deal? A template you haven't interrogated is someone else's intentions you've adopted without examination.

- An AI produces a first draft. You can proofread it, or you can ask: if I had written this, would I have made the same choices? Where would I differ? If you can't say why, you haven't reviewed the draft. You've rubber-stamped output.

- A compliance checklist gets generated from context you provided. Every item looks right. But the decisions aren't in the items: they're in the prioritisation, the weighting, what gets flagged and what doesn't. If you just accept the output, you're not doing compliance. You're going through the motions.

Every task, no matter how routine, has decisions inside it. The person who finds them and makes them is practising. The person who doesn't is producing. Nobody needs to give you permission to be intentional.

Not rules, but decisions

The question facing every profession isn't whether to use AI. That question has been answered. The question is whether we still decide.

Blanket refusals are easy. They photograph well. They make for a good comic. But they're a way of not deciding — of letting a rule do the work that only intentionality can do.

Deciding is hard. It's slow, tiring, and expensive. It's why blanket policies exist — they're cheaper than judging case by case. It's why juniors get funnelled into production — because teaching people to decide is harder than teaching them to output. It's why the comfortable story about AI handling the low-end work is so seductive. It promises that you can skip the hard part and keep the rewards.

But I'm optimistic about this. A machine can produce a portrait with eyes closed. Only a human can choose it. And that choice can be wise or harmful, expert or naive, generous or selfish.

Here's what I believe: when we actually decide — when we stay in the work, confront what we're doing, own the choices — we are more likely to do what is right than what is wrong. Not guaranteed. More likely. The act of deciding forces a confrontation with consequences that producing never does.

That's worth protecting. Not because it's efficient. Because it's human.
